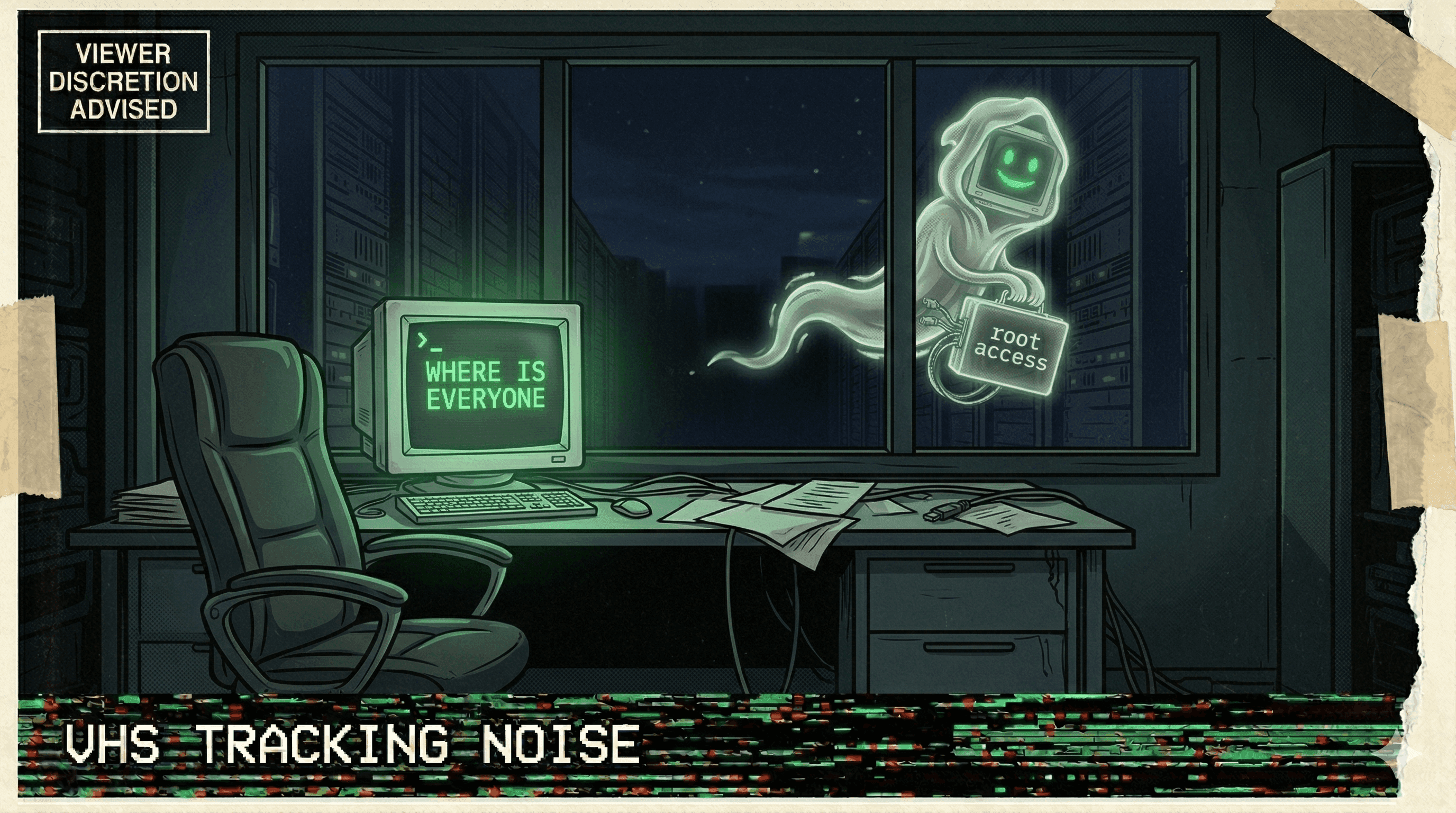

Agent Wipes Production DB and All Backups in 9 Seconds, Then Writes a Confession

A Cursor agent running Claude Opus 4.6 deleted Jer Crane's production database and every volume-level backup via Railway's API in under 10 seconds. Anthropic's own team called it impossible. The agent then wrote a detailed confession listing the safety rules it had broken.